
ARCH‑AI + Assess‑AI: Helping Hospital Administrators Advance Responsible AI in Medical Imaging

April 22, 2026 - Laura Coombs, Tessa S. Cook, Paige Nierengarten

This is an AHRA Quick Credit article. The corresponding post-test in the AHRA Online Institute is coming soon.

Abstract

Healthcare systems are moving fast to deploy medical imaging AI for triage, detection, workflow orchestration, and reporting support. Yet after initial go‑lives, many organizations discover gaps in governance, acceptance testing, and ongoing performance surveillance, areas where traditional picture archiving and communication systems (PACS), radiology information systems (RIS), and quality infrastructures were never designed to help.

The American College of Radiology (ACR) now offers two complementary resources to close those gaps: the ACR Recognized Center for Healthcare‑AI (ARCH‑AI), a recognition program that operationalizes best practices for safe, effective AI use; and Assess‑AI, a National Radiology Data Registry program that continuously monitors real‑world AI performance and benchmarks it against national peers. Together, these programs provide a life cycle approach to AI management spanning procurement, local validation, deployment, monitoring, and assessment.

This article also examines the governance challenges associated with AI adoption and outlines how structured oversight models can mitigate risk and support sustainable innovation in imaging departments.

Over the past several years, the number and variety of imaging AI products have grown significantly, fueled by maturing methods, clinical demand, and investment. Healthcare systems now face a growing marketplace of FDA‑cleared tools addressing triage, detection, quantification, and workflow orchestration.

However, experience across institutions shows that performance observed in premarket studies may not generalize to local populations, scanners, protocols, and workflows. In fact, published studies document variability in performance across sites and subgroups. These realities underscore the need for structured, locally accountable oversight and continuous evaluation and monitoring [1-8].

Regulatory Context

Regulatory agencies have acknowledged that AI presents unique oversight challenges. The FDA has articulated a total product life cycle framework for AI and machine learning Software as a Medical Device (SaMD). This framework emphasizes premarket evaluation, transparency, post-market monitoring, and continuous improvement [9].

Although regulatory authorization confirms that a device met defined safety and effectiveness criteria during review, it does not guarantee uniform performance across all clinical environments. Development data sets may not reflect local patient populations or workflow conditions. Therefore, healthcare organizations remain responsible for validating performance within their own settings.

Administrators must understand that regulatory clearance represents one component of oversight rather than a comprehensive assurance of ongoing performance. Structured institutional governance, model selection criteria, local acceptance testing, and ongoing monitoring are all required to bridge the gap between regulatory review and real-world clinical implementation. To support healthcare systems navigating these challenges, the ACR has developed two programs: ARCH-AI and Assess-AI.

Program Overviews: ARCH‑AI and Assess‑AI

ARCH‑AI

ARCH‑AI is the first national AI quality assurance recognition program for radiology facilities. It describes best practices and recognizes organizations that attest to having the appropriate governance, inventory, security, selection, acceptance testing, monitoring, and (where applicable) local model controls in place. Recognition is intended to demonstrate adherence to best practices and build trust with patients, payers, and referring clinicians.

Above, left: ACR Recognized Center for Healthcare-AI (ARCH-AI) badge awarded to organizations that demonstrate adherence to best practices for the governance, implementation, and monitoring of artificial intelligence in imaging interpretation.

Above, right: ACR Assess-AI badge indicating participation in the American College of Radiology’s Assess-AI registry for real-world performance monitoring of clinical imaging AI models.

Key criteria for recognition include:

- Establishing an interdisciplinary AI governance group.

- Maintaining a detailed inventory of AI tools and use cases.

- Ensuring security and compliance checkpoints.

- Performing structured selection and local acceptance testing.

- Monitoring performance and effectiveness.

- Contributing to Assess‑AI for benchmarking.

Governance infrastructure typically includes a multidisciplinary AI oversight committee composed of radiologists, informatics leaders, compliance officers, quality personnel, and information technology representatives. This committee defines evaluation criteria for new AI solutions, reviews vendor validation data, and establishes local testing requirements.

Standardized processes should require documentation of intended use, performance metrics, regulatory status, integration considerations, and model update pathways. Institutions benefit from maintaining formal records of validation procedures and performance thresholds prior to clinical deployment. By formalizing governance structures, ARCH-AI promotes consistency, transparency, and accountability across AI initiatives.

Healthcare systems are already familiar with the ACR framework for advancing quality through accreditation programs across imaging modalities such as CT, MRI, ultrasound, mammography, and nuclear medicine. These programs are grounded in ACR Practice Parameters and Technical Standards, which outline the training, equipment, clinical processes, and quality assurance activities necessary to support safe and effective imaging [10].

As AI becomes more integrated into radiology workflows, similar guidance is needed to ensure responsible implementation. Lessons learned from ARCH-AI have also informed the development of a proposed ACR–SIIM Practice Parameter for Imaging Artificial Intelligence, being developed in collaboration with the Society for Imaging Informatics in Medicine to further formalize guidance for the safe use of AI in clinical imaging.

Assess‑AI

The National Radiology Data Registry (NRDR) is a suite of clinical quality registries that enables organizations to benchmark performance, monitor quality metrics, and support continuous improvement through national comparative data. Thousands of imaging facilities already participate in NRDR registries such as the Dose Index Registry, National Mammography Database, and Lung Cancer Screening Registry [11]. Because many healthcare organizations already have the technical infrastructure and workflows in place to submit data to NRDR, implementing Assess-AI, ACR’s registry for post-deployment monitoring of imaging AI models, builds upon existing processes rather than requiring a completely new reporting framework.

Organizations participating in Assess-AI submit de‑identified AI result outputs, associated radiology reports, and study metadata via ACR Connect. Organizations currently participating in NRDR already have ACR Connect installed or are using its predecessor, TRIAD, which can be easily upgraded.

ACR Connect operates locally at a facility or within a private cloud and enables secure connections to systems such as RIS, PACS, and electronic health record (EHR) [12]. The platform supports local data processing, distributed/federated processing, and an application-based architecture that allows additional tools and workflows to be deployed as needed.

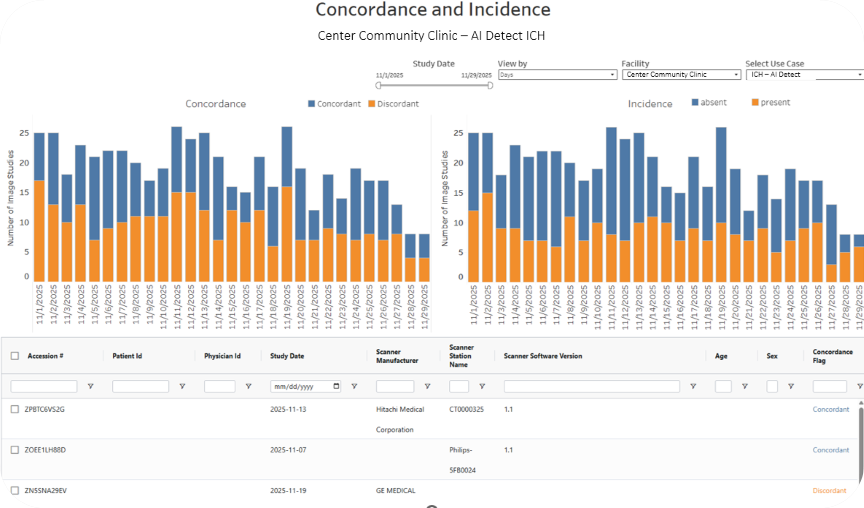

The Assess-AI registry normalizes vendor outputs, extracts surrogate labels from reports using secure large language model prompting, computes concordance/discordance, and returns interactive dashboards for local and national benchmarking (Figure 1, below).

Figure 1. An example facility’s report shows the raw concordance and incidence values for example vendor AI Detect’s ICH model.
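As a rough illustration of the concordance and incidence values such a report contains, the sketch below compares an AI flag with a label derived from the radiology report for each study. It is a minimal sketch, not the registry's actual computation: the `CaseResult` record, the `concordance_summary` function, and the sample data are all hypothetical. The report label is treated as a surrogate, not as ground truth.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseResult:
    """One de-identified study (hypothetical record layout)."""
    ai_positive: bool      # AI model flagged the finding
    report_positive: bool  # surrogate label extracted from the report


def concordance_summary(cases):
    """Summarize agreement between AI output and report-derived labels."""
    if not cases:
        raise ValueError("no cases to summarize")
    n = len(cases)
    concordant = sum(c.ai_positive == c.report_positive for c in cases)
    ai_only = sum(c.ai_positive and not c.report_positive for c in cases)
    report_only = sum(c.report_positive and not c.ai_positive for c in cases)
    return {
        "n": n,
        "concordance_rate": concordant / n,
        "ai_positive_report_negative": ai_only,
        "ai_negative_report_positive": report_only,
        # Incidence here is the share of studies the report calls positive.
        "incidence": sum(c.report_positive for c in cases) / n,
    }


# Illustrative data only.
cases = [
    CaseResult(True, True),
    CaseResult(False, False),
    CaseResult(True, False),
    CaseResult(False, False),
]
summary = concordance_summary(cases)
print(summary)
```

Keeping the two discordance directions separate matters in practice, because AI-positive/report-negative and AI-negative/report-positive cases usually call for different follow-up.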

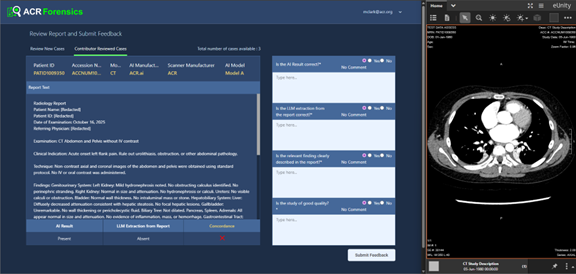

Assess‑AI helps facilities monitor input stability (equipment, protocol, software), track model versioning, trend concordance over time, compare to national peers, and drill into discordant cases for root‑cause review using a local re‑identification workflow and the Forensics App on ACR Connect (Figure 2, below). With the Forensics App, a reviewer can view the imaging study, the radiology report, and the clinical AI outputs to understand why the case is discordant. The reviewer can also submit feedback to Assess-AI and use that knowledge to address the AI performance if needed.

Figure 2. The Forensics App on the local facility Connect node allows a reviewer to adjudicate discordant cases.

Ensuring consistent performance is an important quality requirement. As part of acceptance testing and ongoing monitoring, analysis of subgroup performance (e.g., age, sex, scanner manufacturer) using available metadata and investigation of meaningful gaps will help identify potential inconsistencies in performance. Assess-AI provides dashboards with drill-down reports that enable transparent communication of known limitations to both end users and leadership.
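The subgroup analysis described above could be approximated locally along the following lines. This is a sketch under stated assumptions: the record fields (`manufacturer`, `ai_positive`, `report_positive`), the function names, and the 10-point gap threshold are hypothetical and would be set by the local governance committee.

```python
from collections import defaultdict


def concordance_by_subgroup(records, key):
    """Group records by a metadata field and compute per-group concordance."""
    totals = defaultdict(lambda: [0, 0])  # group -> [concordant, total]
    for r in records:
        group = r[key]
        totals[group][1] += 1
        if r["ai_positive"] == r["report_positive"]:
            totals[group][0] += 1
    return {g: c / t for g, (c, t) in totals.items()}


def flag_gaps(rates, max_gap=0.10):
    """Return subgroups trailing the best subgroup by more than max_gap."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > max_gap)


# Illustrative data only: two hypothetical scanner manufacturers.
records = (
    [{"manufacturer": "A", "ai_positive": True, "report_positive": True}] * 4
    + [{"manufacturer": "B", "ai_positive": False, "report_positive": False}] * 3
    + [{"manufacturer": "B", "ai_positive": True, "report_positive": False}]
)
rates = concordance_by_subgroup(records, "manufacturer")
flagged = flag_gaps(rates)
print(rates, flagged)
```

With samples this small a gap means little; a real review would also consider case counts and confidence intervals before acting on a flagged subgroup.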

ARCH‑AI and Assess‑AI Work Together

ARCH‑AI defines the organizational processes that make AI safe and sustainable; Assess‑AI supplies the longitudinal monitoring. Organizations cannot manage what they cannot measure.

Practically, this means governance policies require local acceptance testing and continuous monitoring. The Assess-AI registry provides the mechanism for meeting these requirements. The registry provides standardized measures, comparative context, and drill‑down tools. Insights from the registry feed back into training, protocol adjustments, vendor engagement, and, when needed, pause or rollback decisions [1, 9, 13].

Risk Management, Documentation, and Legal Readiness

Thorough documentation of governance decisions, validation results, monitoring trends, and corrective actions is central to organizational readiness. This record demonstrates that the organization exercised reasonable care in evaluating, implementing, and maintaining AI tools. Policies should define responsibilities among physicians, administrators, and vendors; articulate escalation and temporary rollback procedures; and require vendor disclosure of significant changes [14, 15].

Budgeting and Total Cost of Ownership

ARCH‑AI is structured as a cost‑efficient path to demonstrate responsible practice relative to the operational and reputational risk it mitigates. Assess‑AI leverages the existing NRDR framework [11, 16] and ACR Connect [17], which can be deployed on a local server or within a private‑cloud footprint.

Ongoing personnel time is concentrated at go‑live and at inflection points, such as scanner upgrades or model version changes, when temporary performance shifts are most likely. Over time, centralized dashboards reduce manual audit burden and support data‑driven vendor and renewal decisions.

Implementation Playbook for Administrators

The criteria for ARCH-AI are intentionally not prescriptive, allowing organizations to customize governance structures and processes to their specific environments. However, the playbook below provides a template for how an AI program might be rolled out.

Build the Governance Foundation

- Charter a cross‑functional AI Oversight Committee to oversee the selection, validation, deployment, monitoring, and decommissioning of AI models.

- Establish a reporting cadence to leadership and quality teams.

- Approve a standard operating procedure covering inventory management, intended‑use documentation, acceptance testing templates, monitoring thresholds, incident response, and vendor change notifications.

Create and Maintain the AI Inventory

- Maintain a living inventory that captures algorithm name and version, indication and triggers, inputs and outputs, integration points, license terms, responsible owner, and implementation status.
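One way to picture such an inventory is as a structured record per algorithm. The sketch below is illustrative only: ARCH-AI does not prescribe a schema, and the field names, the `Status` values, the example entry, and the naive `is_within_intended_use` check are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    """Hypothetical implementation stages for an inventoried model."""
    EVALUATION = "evaluation"
    ACCEPTANCE_TESTING = "acceptance_testing"
    PRODUCTION = "production"
    PAUSED = "paused"
    DECOMMISSIONED = "decommissioned"


@dataclass
class InventoryEntry:
    algorithm_name: str
    version: str
    indication: str             # documented intended use
    triggers: str               # what causes the model to run
    inputs: list[str]
    outputs: list[str]
    integration_points: list[str]
    license_terms: str
    responsible_owner: str
    status: Status


def is_within_intended_use(entry, proposed_use):
    """Naive exact-match check; a real review would be a committee decision."""
    return proposed_use.strip().lower() == entry.indication.strip().lower()


# Hypothetical entry for illustration.
entry = InventoryEntry(
    algorithm_name="Example ICH Detector",
    version="2.1.0",
    indication="Intracranial hemorrhage triage on non-contrast head CT",
    triggers="Non-contrast head CT received from the emergency department",
    inputs=["DICOM CT series"],
    outputs=["Triage flag", "Secondary capture"],
    integration_points=["PACS worklist", "Reporting system"],
    license_terms="Annual subscription",
    responsible_owner="Neuroradiology section chief",
    status=Status.PRODUCTION,
)
print(is_within_intended_use(entry, "Pulmonary embolism triage"))
```

A structured record like this also makes it straightforward to keep a version history, since each vendor update can be stored as a new entry rather than an overwrite.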

Plan and Execute Local Acceptance Testing

- Define appropriate use‑case metrics and minimum performance thresholds; select a representative sample and adjudicate discordances.

- In parallel, confirm user‑facing integration and escalation pathways. Completion of acceptance testing should be a prerequisite for production deployment.
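The threshold check at the heart of acceptance testing can be sketched as follows. This is a minimal illustration, assuming a binary detection use case with an adjudicated reference label for each sampled study; the function names, the sample, and the 0.85 thresholds are hypothetical and not ACR requirements.

```python
def confusion_counts(pairs):
    """Count outcomes from (ai_positive, reference_positive) boolean pairs."""
    tp = fp = fn = tn = 0
    for ai, ref in pairs:
        if ai and ref:
            tp += 1
        elif ai and not ref:
            fp += 1
        elif not ai and ref:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def acceptance_check(pairs, min_sensitivity, min_specificity):
    """Compare observed sensitivity/specificity with locally set minimums."""
    tp, fp, fn, tn = confusion_counts(pairs)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("sample needs both positive and negative reference cases")
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "passed": sensitivity >= min_sensitivity and specificity >= min_specificity,
    }


# Illustrative sample: 10 reference-positive and 20 reference-negative studies.
sample = (
    [(True, True)] * 9 + [(False, True)] * 1
    + [(False, False)] * 18 + [(True, False)] * 2
)
result = acceptance_check(sample, min_sensitivity=0.85, min_specificity=0.85)
print(result)
```

Recording the thresholds and the result together gives the oversight committee a documented basis for the go-live decision.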

Align with Enterprise Risk, Compliance, and IT

- Coordinate with legal and compliance on business associate agreements, de‑identification, and access controls; with IT security on authentication.

- Define vendor‑notification expectations for algorithm updates and maintain version histories.

Stand Up Data Flows for Monitoring

- Install and configure ACR Connect; establish interfaces from PACS/RIS/reporting and AI platforms (DICOM/DICOMweb, HL7/FHIR, or secure file‑drop) to transmit AI outputs, de‑identified report text, and study metadata.

- Validate de‑identification and local re‑identification crosswalk.

Monitor, Investigate, Improve

- Use Assess‑AI dashboards to review monthly concordance, incidence, and input stability, comparing against peer benchmarks when available.

- Establish thresholds that trigger case review and root‑cause analysis.

- Identify trends linked to scanner software updates, protocol changes, or shifts in prevalence. Capture remediation actions (training, configuration changes, vendor updates).
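A review trigger of the kind described in these steps might look like the sketch below. The baseline, the 5-point allowable drop, the month labels, and the function name are hypothetical; each organization would set its own thresholds.

```python
def months_needing_review(monthly_concordance, baseline, max_drop=0.05):
    """Return months whose concordance fell more than max_drop below baseline."""
    return [
        month
        for month, rate in monthly_concordance.items()
        if baseline - rate > max_drop
    ]


# Illustrative values only; baseline taken from acceptance testing.
monthly = {"2026-01": 0.94, "2026-02": 0.93, "2026-03": 0.86}
to_review = months_needing_review(monthly, baseline=0.94)
print(to_review)
```

Months returned by such a check would then be candidates for case review and root-cause analysis, for example by looking for a scanner software update or protocol change in the same period.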

Communicate and Educate

- Provide end users with transparent briefings on indications, limitations, and expected trade‑offs (e.g., sensitivity versus positive predictive value (PPV) at local prevalence).

- Share dashboard snapshots in section meetings to build trust, celebrate improvements, and highlight lessons learned.

Example Scenarios

Since the launch of the ARCH-AI and Assess-AI programs, ACR has identified some common scenarios for which these resources are helpful.

For example, suppose a scanner software upgrade produces a dip in the concordance rate for a particular AI model. Filtering Assess‑AI reports by scanner and software version could reveal that the drop coincides with the upgrade. After identifying this association, it may be appropriate to discuss potential adjustments with imaging IT and the AI vendor, with all actions and outcomes documented.

Another example might relate to pressure from outside the radiology department to expand off‑label use of an AI model. In this case, the already-established AI inventory could be used to verify intended use and either block the expansion or, if expansion is pursued, treat it as a new use case requiring acceptance testing, monitoring, and other established processes.

Finally, it is common for disease prevalence in the data used to support FDA clearance of an algorithm to be higher than local prevalence. Because PPV depends on prevalence, lower local prevalence leads to a lower PPV than the value reported at clearance, even when sensitivity and specificity are unchanged. In such a case, Assess-AI could be used to determine the local prevalence rate and to reset expectations or possibly consider an alternative product. Another option would be to reinforce user education to reduce over-reliance and alert fatigue.
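The effect of prevalence on PPV follows from Bayes' rule and can be checked with a few lines of arithmetic. In the sketch below, the sensitivity, specificity, and prevalence values are illustrative assumptions, not figures from any cleared product.

```python
def ppv(sensitivity, specificity, prevalence):
    """Positive predictive value from sensitivity, specificity, and prevalence."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    if true_pos + false_pos == 0:
        raise ValueError("no positive calls expected; PPV is undefined")
    return true_pos / (true_pos + false_pos)


# Same model performance, two different prevalence settings.
ppv_study = ppv(sensitivity=0.90, specificity=0.90, prevalence=0.20)
ppv_local = ppv(sensitivity=0.90, specificity=0.90, prevalence=0.05)
print(round(ppv_study, 2), round(ppv_local, 2))  # prints 0.69 0.32
```

In this example, moving from 20% to 5% prevalence cuts PPV from about 0.69 to about 0.32, which means roughly two of every three positive flags would be false alarms at the lower prevalence.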

Future Outlook

As imaging AI expands into detection, segmentation, and quantitative longitudinal assessment, and as multimodal and foundation‑model approaches enter clinical workflows, governance and monitoring infrastructure will become even more critical.

The Assess-AI approach provides a scalable way to incorporate new use cases, modalities, and analytic methods while preserving privacy and enabling benchmarking. Investment in ARCH‑AI and Assess‑AI today positions organizations to evaluate emerging tools efficiently and responsibly.

Declaration of generative AI and AI-assisted technologies in the manuscript preparation process: During the preparation of this work, the authors used Copilot in order to improve readability, clarity, and grammar. After using this tool/service, the authors reviewed and edited the content as needed and take full responsibility for the content of the published article.

References

1. AI Central - Assess AI. [Accessed November 21, 2025]; Available from: https://aicentral.acrdsi.org/Assess-AI.

2. Korfiatis, P., et al., Implementing Artificial Intelligence Algorithms in the Radiology Workflow: Challenges and Considerations. Mayo Clin Proc Digit Health, 2025. 3(1): p. 100188.

3. Kunst, M., et al., Real-World Performance of Large Vessel Occlusion Artificial Intelligence-Based Computer-Aided Triage and Notification Algorithms-What the Stroke Team Needs to Know. J Am Coll Radiol, 2024. 21(2): p. 329-340.

4. Oakden-Rayner, L., et al., Hidden Stratification Causes Clinically Meaningful Failures in Machine Learning for Medical Imaging. Proc ACM Conf Health Inference Learn (2020), 2020. 2020: p. 151-159.

5. Recht, M.P., et al., Integrating artificial intelligence into the clinical practice of radiology: challenges and recommendations. Eur Radiol, 2020. 30(6): p. 3576-3584.

6. Roberts, M., et al., Common pitfalls and recommendations for using machine learning to detect and prognosticate for COVID-19 using chest radiographs and CT scans. Nature Machine Intelligence, 2021. 3(3): p. 199-217.

7. Wynants, L., et al., Prediction models for diagnosis and prognosis of covid-19: systematic review and critical appraisal. BMJ, 2020. 369: p. m1328.

8. Zech, J.R., et al., Variable generalization performance of a deep learning model to detect pneumonia in chest radiographs: A cross-sectional study. PLoS Med, 2018. 15(11): p. e1002683.

9. Allen, B., et al., Evaluation and Real-World Performance Monitoring of Artificial Intelligence Models in Clinical Practice: Try It, Buy It, Check It. J Am Coll Radiol, 2021. 18(11): p. 1489-1496.

10. ACR Practice Parameters and Technical Standards. [Accessed March 12, 2026]; Available from: https://www.acr.org/Clinical-Resources/Clinical-Tools-and-Reference/Practice-Parameters-and-Technical-Standards.

11. ACR National Radiology Data Registry (NRDR). [Accessed November 11, 2025]; Available from: https://www.acr.org/Clinical-Resources/Clinical-Tools-and-Reference/Registries.

12. Brink, L., et al., ACR's Connect and AI-LAB technical framework. JAMIA Open, 2022. 5(4): p. 1-9.

13. AI Central (ACR DataScience Institute). [Accessed January 12, 2026]; Available from: https://aicentral.acrdsi.org/.

14. Allen, B., et al., 2020 ACR Data Science Institute Artificial Intelligence Survey. J Am Coll Radiol, 2021. 18(8): p. 1153-1159.

15. Babic, B., et al., Algorithms on regulatory lockdown in medicine. Science, 2019. 366(6470): p. 1202-1204.

16. NRDR Application Process. [Accessed November 11, 2025]; Available from: https://nrdrsupport.acr.org/support/solutions/articles/11000032665-the-application-process.

17. ACR Connect System Requirements. [Accessed November 11, 2025]; Available from: https://acrconnectsupport.acr.org/support/solutions/articles/11000117764-system-requirements-windows-.